The Machine That Can't Explain Itself

Why AI adoption is outrunning accountability and what happens when the auditors arrive

The incident review starts the same way they all do now.

Twelve people on a Zoom. One shared screen. A timeline that looks clean until you zoom in.

“Who approved this change?”

Silence.

Not because nobody knows. Because everyone knows what the answer implies: whoever says the name also inherits the blast radius.

So the room does what modern rooms do. It talks around the problem. It blames “the AI.” It blames “the vendor.” It blames “lack of training.”

But the truth is uglier and simpler.

The work moved. Money moved. Access moved. And when the auditors ask why, your company can’t explain itself in human language.

That’s the new failure mode. Not hallucination. Not model drift. Not “AI ethics.”

Audit opacity. I came across the term recently while preparing a customer brief.

Audit opacity is the condition where an organization has adopted AI faster than it has built the ability to account for what that AI is doing, or why.

Eighteen months from now, this won’t be a rare incident. It will be the default state of any organization that delegated action faster than it built accountability.

The transparency cliff

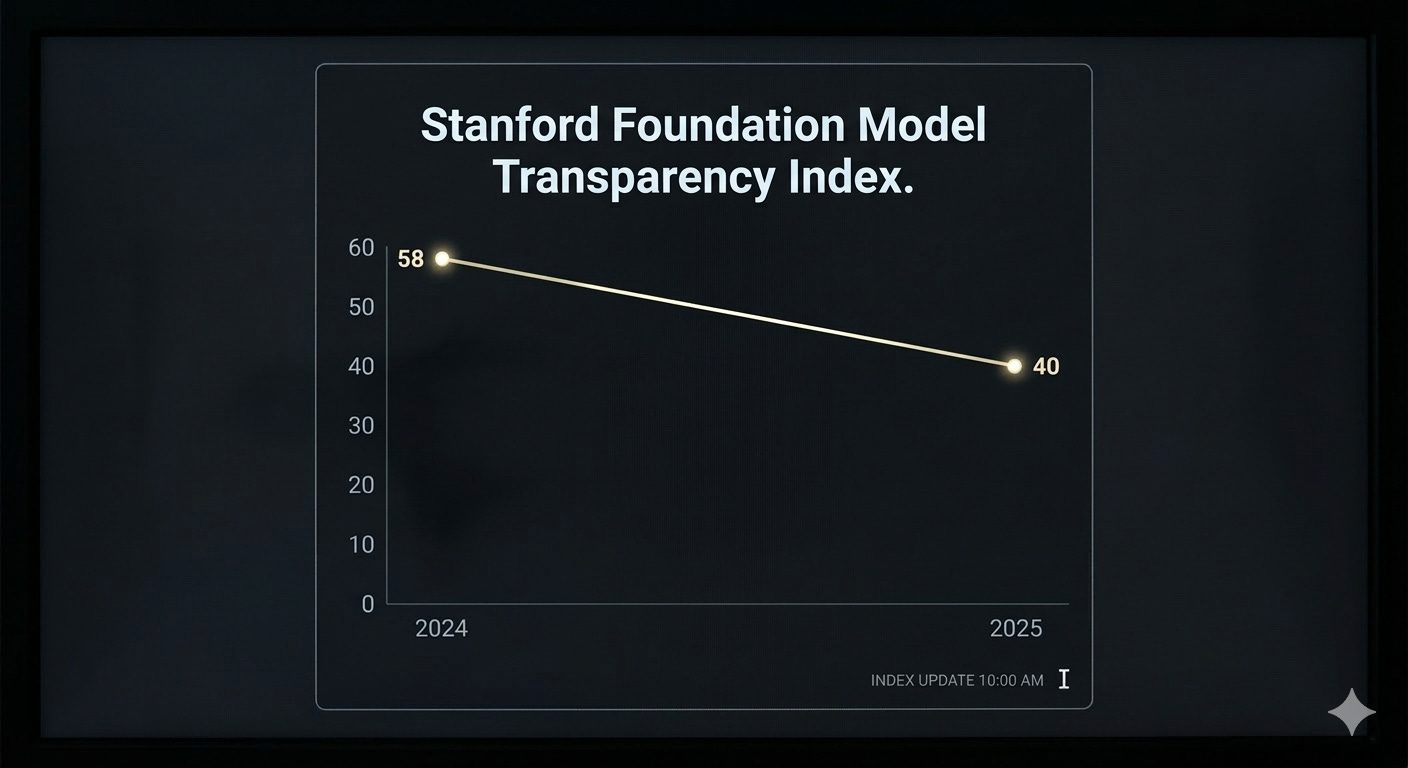

Every year, Stanford’s Institute for Human-Centered AI publishes its Foundation Model Transparency Index. A scorecard grading how well AI companies disclose what they build, how they build it, and what happens when people use it.

In 2024, the average score was 58 out of 100. Not great, but passable.

In 2025, the average dropped to 40.

That’s an 18-point drop, roughly 30%, in one year. Not stagnation. Regression. The organizations building the most consequential technology on the planet became less explainable, not more.

Stanford’s researchers were blunt. The entire industry is systemically opaque on four critical dimensions: training data, training compute, how models are used, and the resulting impact on society.

Read that list again. Those aren’t edge cases. Those are the four things you’d need to answer to explain anything about how your AI works.

And the gap is widening.

A mid-level ops leader is staring at Slack at 11:47 p.m.

A bot just posted: Resolved.

No ticket. No link. No note. Just that one word.

He scrolls up. There’s a thread of agent-to-agent chatter — summaries of summaries, decisions made in the passive voice, actions attributed to “the system.”

It feels efficient. It feels modern.

And it feels unownable.

He types the question nobody wants to type: “What changed?”

The bot replies instantly. Confident. Polished. Useless.

“Several updates were applied to improve outcomes.”

This is how the real problem arrives in enterprise: not with a bang, but with a clean status message that cannot be audited.

The tooling desert

You might assume someone has built the fix. Someone has a dashboard. Someone has an audit trail.

In a paper presented at the 2025 ACM CHI conference, researchers surveyed 435 AI audit tools, the largest inventory of its kind. Tools built by academics, startups, governments, nonprofits. The full ecosystem.

What they found is revealing not for what exists, but for what doesn’t.

Most tools focus on two things: standards compliance and performance analysis. These are the easy problems. They’re measurable, publishable, fundable.

But the hard problems (harms discovery, audit communication, and transparency into how models actually behave in production) are almost completely unserved. The researchers described it as a structural gap in accountability infrastructure.

We have 435 tools, and almost none of them help you answer the question that ops leader typed at 11:47 p.m.

What changed?

ISACA, the global body that sets standards for IT governance, published its own warning in 2025, specifically about agentic AI. Their assessment: agentic systems make decisions with limited traceability, which weakens accountability and complicates regulatory compliance. The word they kept returning to was opacity.

Not a bug. Not a feature request. A structural condition.

On the earnings call, the CEO says the line investors want to hear:

“We’re an AI-first company.”

Applause. Relief. The stock steadies. The CEO and CFO answer all the analysts’ questions with ease and assurance.

Then on Monday morning, a director in finance opens the close checklist and realizes something quietly terrifying: half the work is no longer done by people. It’s done by helpers. Little automations. Prompt chains. Vendor copilots. The kind nobody files a ticket for, because filing tickets is slower than getting the answer.

The close is faster. The numbers look right.

But the company is becoming a machine that can’t explain how it thinks.

That’s the trade most executives are making without realizing it: speed for truth. Velocity for verifiability. And every quarter that passes with adoption outrunning accountability, the debt compounds.

A paper published this year in Social Studies of Science carries a title that should make every CTO uncomfortable: “It’s not a bug, it’s a feature: How AI experts and data scientists account for the opacity of algorithms.” The researchers found that technical leaders don’t treat opacity as a problem to solve. They treat it as a condition to manage. They rationalize it. They normalize it. They build culture around not knowing rather than building systems to find out.

This is how institutions become audit opaque. Not through negligence. Through optimization.

The parking brake

I run AI readiness diagnostics for organizations. In a recent engagement, three leaders at the same company completed the same assessment — an executive, a security and infrastructure leader, and a director-level operator closest to the customer.

The executive was confident. The operator was skeptical. The gap between them was wide enough that they weren’t describing the same company.

But the real finding wasn’t the spread. It was the convergence.

All three bottomed out on the same dimension: Measurement & Feedback. The ability to know whether what you’re building is actually working.

The infrastructure leader, the one closest to the engineering work and with the strongest technical credentials in the room, rated the organization’s measurement capability as essentially nonexistent. The person who knows best how the thing is built is the one saying: we have no idea if it’s working.

That’s not a technology failure. That’s audit opacity in the bloodstream.

And here’s what makes it dangerous: the executive didn’t know. Not because he’s negligent. Because the feedback mechanisms that would surface this gap don’t exist yet.

Code was never the moat. The moat was always the stored process, the way work gets done, the way decisions get traced, the way accountability gets assigned. When that process is implicit, political, and fear-driven, AI doesn’t fix it.

AI scales it.

The regulatory bet

The policy world is starting to pay attention, but there’s a timing problem.

The proposed AI Research, Innovation, and Accountability Act in the U.S. Senate would add mandatory reporting requirements. The EU’s Digital Services Act is creating new audit obligations. ISO/IEC 42001 defines a management system standard for AI. The OECD is publishing state-of-the-art guidance on algorithmic transparency.

All of it matters. None of it is fast enough.

The regulatory infrastructure assumes organizations can explain what they’ve deployed. But if the Stanford index is right, and the trend line says it is, the gap between what organizations are deploying and what they can explain about their deployments is growing, not shrinking.

Regulation is coming for a world that is becoming harder to regulate by the quarter.

Come back to today

Look at your rollout dashboard. The one that says complete.

If adoption is flat, the tool isn’t the problem. The parking brake is.

If your incident reviews end with “the AI did it,” you don’t have an AI problem. You have an ownership problem.

If your agents can resolve tickets but nobody can explain what changed, you don’t have automation. You have institutional amnesia.

And if you want AI to amplify performance, you first have to make your organization explainable. Not explainable in a boardroom deck. Not explainable in a vendor pitch. Explainable in the way that matters: when someone asks who is responsible, a human being can answer without flinching.

That’s the test.

Most organizations will fail it not because they’re bad at AI, but because they were already audit opaque before AI arrived. The technology just removed the last bits of friction that were hiding the gap.

The companies that win the next five years won’t be the ones that adopt AI the fastest.

They’ll be the ones that can explain what it did on Monday morning.

John Kwarsick is the founder of Kwarsick Consulting and the creator of THE WHEEL Framework, an eight-dimension AI readiness diagnostic. He works with leadership teams to identify the structural gaps that block AI adoption — and the measurement systems needed to close them.

If your organization is moving fast on AI and you’re not sure whether you can explain what it’s doing, that’s the conversation worth having. kwarsickconsulting.com